Two things to get out here first: 1. I can’t believe I’m using so many Microsoft products and 2. I really dislike SharePoint (I’d say hate, but I’m trying to keep positive here).

In this particular instance, there was a size/performance limitation of a SharePoint site. The owner of the data wanted all of the data copied off, with the metadata, so files could be deleted to keep the performance up. Due to some contractual issues, the admins of the site would not do any work beyond creating a user, they also would not give elevated privileges to the site. That means the obvious things (like ShareGate) were out of the picture.

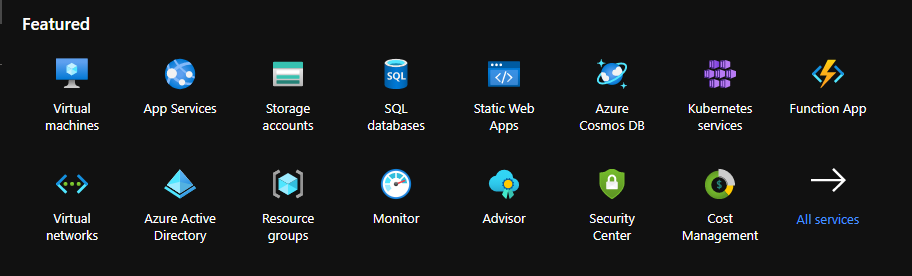

The basics of the solution I designed: store the data in an Azure File Share. Store the metadata in CosmosDB. After working through a few corner cases, it worked! Today, I’m mass extracting some data and metadata for the first time.

Getting the Data and Metadata from SharePoint

While I can’t share code, I can go over the steps.

The archiver lived on a Ubuntu VM. We used a Standard D4s instance, only because we needed 16 GiB of memory to handle some of the files. I wrote a proof of concept script in Python using the sharepoint Python module. You will have to play around with the different libraries as some only seem to work against certain versions of SharePoint.

The archiver accesses the list within SharePoint and iterates through each item. The file is downloaded from SharePoint then uploaded to to Azure File Share in a directory named for the library that the file was in. We then convert the metadata into JSON and add an ‘id’ property that consists of ‘{library name}—{file name}’. That JSON data is then sent to CosmosDB.

At this point, the data is stored and the metadata is stored and searchable.

Search

For accessing the files, we created a simple Flask base web app that searches the LinkFileName property in the metadata. The app shows some basic data (size, last modified) in the results. Clicking on an item will show all of the metadata. This is very rudimentary but meet the needs of the project.

Mass Extraction

The project using this data is coming to an end and they need to hand off a certain subset of documents. During the project, they had identified these in the metadata. Our approach made this extremely easy accomplish. A simple query to the CosmosDB returns the meta data from the matched items. From that I get the URL in the Azure File Share, download the file, then write the metadata to a JSON file. The extractor is only 70 lines of code, a big part of that is just reading in all of the environment variables.

The Right Way?

Short answer, “No.” The best way to approach this would have been through a proper tool. Especially since this data is most likely just going to be imported into a newer SharePoint site. After all of this someone (who is not me) is still going to have to take all of these files and they JSON files containing the meta data and write something to import that into the newer SharePoint site. This is all a bit of a waste of time, but it did protect the data and the customer. To that end, it’s a success!